Turning a Grain of Sand into a Microchip

5 January 2023

Advances in digitization depend heavily on one of the tiniest particles found on our planet – the grain of sand. It is the basis of the highly sought-after semiconductor that many modern technologies run on. Let us take a closer look at how a semiconductor is made.

Sand is the new gold of the economy and one of the building blocks of our civilization. According to the United Nations, it is the most in-demand natural raw material after water. No wonder, as it can be processed into glass, concrete, rubber, tiles, and porcelain, among other things. The technology industry also relies on this raw material. Smartphones, laptops, and even cars use small, ultra-high-performance microchips, also known as semiconductors, for automation and artificial intelligence. In their basic form, these chips consist of micron-grade quartz sand.

However, it is not the sand itself that is crucial for chip production, but the silicon dioxide it contains. To obtain the purest possible silicon, the oxygen is removed from the silicon dioxide under high heat and with the addition of carbon.
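The reduction described above is commonly written as SiO2 + 2 C → Si + 2 CO. As a rough illustration of how much silicon a given amount of silicon dioxide can yield at most, the following sketch computes the mass fraction from standard atomic masses. The function name and the 1 kg example are assumptions chosen for illustration; a real furnace yields less, and the product still has to be purified further.

```python
# Standard atomic masses in g/mol (rounded).
M_SI = 28.086
M_O = 15.999


def max_silicon_yield_kg(sio2_kg: float) -> float:
    """Upper bound on the silicon mass contained in pure SiO2 (hypothetical helper)."""
    m_sio2 = M_SI + 2 * M_O  # molar mass of silicon dioxide
    return sio2_kg * M_SI / m_sio2


print(f"{max_silicon_yield_kg(1.0):.3f} kg of silicon per kg of SiO2 at most")
```

In other words, a little less than half of the mass of pure silicon dioxide is silicon; the rest is oxygen.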

Why Silicon?

Microchips are often simply called “semiconductors”, a name that comes from the property of the material they are made of. Due to its atomic structure, silicon can both conduct electrical current and block it, depending on the conditions.

A pure single crystal of silicon is practically non-conductive at room temperature: each atom has four outer electrons, all of which are bound to neighboring atoms, so there are hardly any free charge carriers. Silicon only becomes usefully conductive through a process known as doping. Small amounts of foreign atoms (usually boron or phosphorus), each of which has one outer electron fewer or one more than silicon, are incorporated into the silicon crystal lattice. Transistors are created by combining an insulating layer of silicon oxide with a layer of electrically conductive polysilicon. These control the electrical voltage and current and are thus the most important component of the microchip.
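To give a sense of how strongly doping changes conductivity, here is a minimal sketch based on the textbook relation sigma = q · n · mu (charge times carrier density times mobility). All numbers are assumed, approximate room-temperature values for silicon, the doping level is hypothetical, and the sketch ignores that mobility drops somewhat as doping increases.

```python
Q = 1.602e-19  # elementary charge in coulombs


def conductivity(n_per_cm3: float, mobility_cm2_per_vs: float) -> float:
    """sigma = q * n * mu in siemens per centimeter, for one carrier type."""
    return Q * n_per_cm3 * mobility_cm2_per_vs


# Assumed, approximate textbook values for silicon at room temperature.
MU_N = 1350.0         # electron mobility, cm^2/(V*s)
MU_P = 480.0          # hole mobility, cm^2/(V*s)
N_INTRINSIC = 1.0e10  # free carriers per cm^3 in pure silicon
N_DONORS = 1.0e16     # hypothetical phosphorus doping level per cm^3

# Pure silicon: equally many electrons and holes contribute.
sigma_pure = conductivity(N_INTRINSIC, MU_N) + conductivity(N_INTRINSIC, MU_P)
# Phosphorus-doped silicon: donor electrons dominate, holes are neglected.
sigma_doped = conductivity(N_DONORS, MU_N)

print(f"pure:  {sigma_pure:.2e} S/cm")
print(f"doped: {sigma_doped:.2e} S/cm")
print(f"ratio: {sigma_doped / sigma_pure:.1e}")
```

Under these assumptions, a doping level that corresponds to only about one foreign atom per several million silicon atoms raises the conductivity by several orders of magnitude.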

A Complex Production

In the most powerful semiconductors, billions of transistors are installed and interconnected. This allows highly complex circuits to be accommodated on a surface of just a few square millimeters. But for these to work, the layout and design of each chip must be well thought out. The availability of special design tools makes it possible to digitally design the integrated circuits in a three-dimensional structure. This allows each layer to be viewed separately, and the interaction between them to be simulated. The digital blueprint is then used in the form of photomasks as a kind of template for chip production.

To ensure the highest possible quality during production, state-of-the-art technologies are used for inspection at every step. In addition, the chips may only be manufactured under the strictest conditions in a cleanroom: a dust-free environment in which the temperature and humidity are stable. In approximately ten liters of air, a maximum of one single dust particle larger than 0.5 micrometers may be present. The ventilation and filtration processes are correspondingly complex. Yet only with such high precision and quality can these sophisticated microchips be created.
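The particle figure above can be put into more familiar units with a little arithmetic. The sketch below converts it to particles per cubic meter and, as an addition that the article itself does not make, compares it with the class limits of the ISO 14644-1 cleanroom standard, which are given by 10^N · (0.1 / D)^2.08 particles per cubic meter for class N and particle size D in micrometers. The function names are hypothetical.

```python
def particles_per_m3(count: float, liters: float) -> float:
    """Convert 'count particles in so many liters' to particles per cubic meter."""
    return count * 1000.0 / liters


def iso_limit_per_m3(iso_class: int, size_um: float) -> float:
    """ISO 14644-1 class limit for particles of at least size_um micrometers."""
    return 10 ** iso_class * (0.1 / size_um) ** 2.08


# Figure quoted in the text: one particle of 0.5 micrometers or more in ten liters.
concentration = particles_per_m3(1, 10)

# Smallest ISO class whose 0.5 micrometer limit still permits this concentration.
iso_class = next(n for n in range(1, 10) if iso_limit_per_m3(n, 0.5) >= concentration)

print(f"{concentration:.0f} particles per m^3, within the limit of ISO class {iso_class}")
```

This is only an orientation; which class a given fab actually specifies is not stated in the article.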

That is everything you need to know about the prerequisites, but how exactly is a microchip created?

A Multilayered Process

Once the silicon has been extracted from the quartz sand and purified, the resulting silicon rods are cut into wafer-thin slices, known as wafers, using a special sawing technique. These wafers form the basis of a complex coating process.

First, a non-conductive oxide layer is created on the wafer at about 1000 degrees Celsius, onto which light-sensitive photoresist is then spread. Special equipment illuminates the wafer through a photomask, which transfers parts of the digitally created stencil onto the silicon wafer. The exposed portion is developed and etched away; the unexposed areas remain and protect the underlying layer.

This process – coating of oxide, photosensitizing, masking, exposing, and etching away – is repeated several times during chip fabrication until the desired integrated circuit is completely built. Meanwhile, an electrically conductive layer of polysilicon is applied, and doping is performed, in which foreign atoms are introduced into the exposed silicon to affect its conductivity.

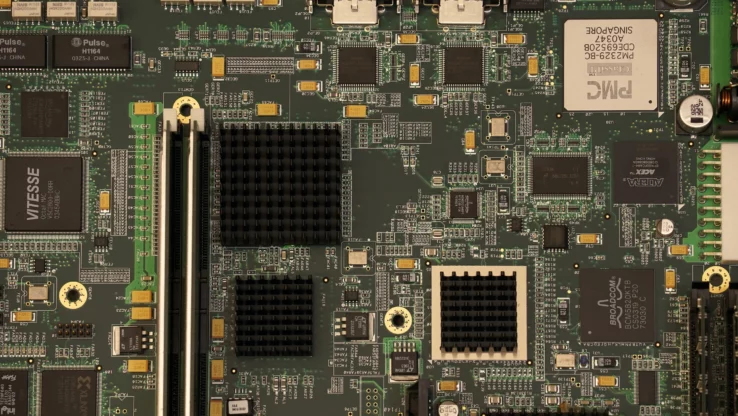

Lastly, contact holes are etched to provide access to the conductive layers. The chip surface is then polished to micrometer precision using a chemical-mechanical process. The finished chips then only need to be sawn out of the wafer. Depending on the wafer diameter, this produces several dozen to several thousand chips per wafer, each of which measures only a few square millimeters to a few square centimeters. In the final step, assembly, these miniature chips are placed in a package and electrically connected. The finished semiconductor products can now be attached to circuit boards via various connection types.
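The range of chips per wafer mentioned above can be reproduced with a widely used rule of thumb: divide the wafer area by the chip area and subtract a correction for the incomplete chips along the round edge. The sketch below is only an estimate under assumed values. The 300 mm wafer diameter and the die areas are hypothetical examples, and effects such as saw lanes, edge exclusion, and defective chips are ignored.

```python
import math


def dies_per_wafer(wafer_diameter_mm: float, die_area_mm2: float) -> int:
    """Rough estimate: wafer area / die area minus a loss term for the round edge."""
    gross = math.pi * (wafer_diameter_mm / 2) ** 2 / die_area_mm2
    edge_loss = math.pi * wafer_diameter_mm / math.sqrt(2 * die_area_mm2)
    return max(0, int(gross - edge_loss))


for area in (10, 100, 800):  # hypothetical die areas in mm^2
    print(f"300 mm wafer, {area} mm^2 die: about {dies_per_wafer(300, area)} chips")
```

With these assumptions, a large chip of several square centimeters fits a few dozen times on the wafer, while a chip of a few square millimeters fits several thousand times.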

Demand Is Set to Keep Rising

Demand for technology increases with each passing year. Consumers expect better performance, low energy consumption, and availability. That is where microchips come in, and why demand for them has skyrocketed in recent years and will continue to rise in the future.

In addition, sand as a natural raw material is not available in endless supply. Although large quantities of sand are deposited on the world’s coasts every year, global demand far exceeds the amount produced by weathering.

The semiconductor shortage is expected to persist for many years to come. Apart from the increasing scarcity of sand, another reason is that chip production is so complex and costly. Only a few companies worldwide have the necessary know-how and manufacturing capabilities to produce high-quality semiconductors. Ensuring supply therefore requires a stringent sourcing strategy that enables planning and at the same time can react to unforeseen market developments.